I’ve recently started upgrading my projects to SourceForge’s new “forge” software, Allura. Upgrading existing projects has been possible for quite some time (IIRC since July), but I didn’t think I had time to deal with it until now. My short experience with the “new” SourceForge resulted in two short insights.

Continue reading The New SourceForge


Scanning Lecture Notes – Separating Colors

Continuing my journey to perfect my scanned lecture notes, I’ll be reviewing my efforts to find a good way to threshold scanned notes to black and white. I’ve spent several days experimenting with this stuff, and I think I’ve managed to improve on the basic methods used.

In the process of experimenting, I’ve come up with what I think are the 3 main hurdles in scanning notes (or text in general) to black and white.

- Bleeding. When both sides of the paper are used, the ink might “bleed” through to the other side. Even if the ink doesn’t actually pass through, it might still be visible as a kind of shadow when scanning, just like when you hold a piece of paper in front of a light and are able to make out the text on the other side.

- Non-black ink. Photocopying blue ink is notoriously messy. Scanning it to b&w also poses challenges.

- Skipping. This is an artifact that is sometimes introduced when writing with a ballpoint pen. It’s a result of inconsistent ink flow, and is rarer with more liquid inks such as those in rollerballs or fountain pens.

These issues can be seen in the first three images. These images are the originals I’ve tested the various methods with. The other images are the results of the various methods explained in this post, and should convey the differences between them.
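To make the hurdles concrete, here is a minimal sketch of the most basic method, a single global threshold. It is not one of the methods compared in the post; the function name `threshold` and the pixel values are made up for illustration.

```python
def threshold(gray, cutoff=128):
    """Map each 0-255 grayscale pixel to 0 (black) or 255 (white)."""
    return [[0 if px < cutoff else 255 for px in row] for row in gray]

# Hypothetical pixel values, in order:
# dark ink, bleed-through shadow, light (e.g. blue) ink, blank paper.
row = [[30, 200, 140, 250]]

# A low cutoff drops the bleed-through, but the light ink is lost too.
print(threshold(row))              # [[0, 255, 255, 255]]

# A high cutoff keeps the light ink, but the bleed-through turns black.
print(threshold(row, cutoff=210))  # [[0, 0, 0, 255]]
```

With these made-up values no single cutoff keeps the light ink while dropping the shadow, which is why a fixed threshold alone struggles with bleeding and non-black ink.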


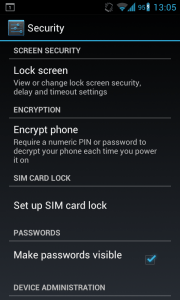

Some Thoughts About Android’s Full Disk Encryption

One of the new features touted by ICS is full-disk encryption (actually, it was first available in Android 3). The first look is promising. The Android developers went with dm-crypt as the underlying transparent disk encryption subsystem, which is the de facto way to perform full-disk encryption in Linux nowadays. This ensures both portability of the encrypted file systems and a tried-and-tested implementation. The cipher itself is 128-bit AES in an ESSIV mode, and the encryption key is derived from the password using PBKDF2 (actually, the derived key encrypts the actual encryption key, which allows fast password changes). So where do I think it went wrong?
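The two-level key scheme mentioned in the parentheses can be sketched roughly as follows. This is an illustration of the idea only, not Android’s code: the hash (`sha1`), the iteration count, the salt size and all function names are assumptions, and a plain XOR stands in for the real AES step so that the example needs nothing beyond the standard library.

```python
import hashlib
import os

ITERATIONS = 2000  # assumed value, for illustration only
KEY_LEN = 16       # 128-bit keys, matching the AES-128 mentioned above

def derive_kek(password, salt):
    """Derive the key-encrypting key (KEK) from the password with PBKDF2."""
    return hashlib.pbkdf2_hmac("sha1", password.encode("utf-8"),
                               salt, ITERATIONS, KEY_LEN)

def toy_wrap(key, kek):
    """Toy stand-in for AES: XOR the key with the KEK. NOT real encryption."""
    return bytes(a ^ b for a, b in zip(key, kek))

toy_unwrap = toy_wrap  # XOR is its own inverse

def change_password(wrapped_key, salt, old_password, new_password):
    """Re-wrap the master key under a new password; disk data is untouched."""
    master_key = toy_unwrap(wrapped_key, derive_kek(old_password, salt))
    new_salt = os.urandom(16)
    return toy_wrap(master_key, derive_kek(new_password, new_salt)), new_salt

# The random master key is what would actually encrypt the disk.
salt = os.urandom(16)
master_key = os.urandom(KEY_LEN)
wrapped = toy_wrap(master_key, derive_kek("1234", salt))

# Changing the password only re-wraps the 16-byte master key.
wrapped2, salt2 = change_password(wrapped, salt, "1234", "longer passphrase")
assert toy_unwrap(wrapped2, derive_kek("longer passphrase", salt2)) == master_key
```

Because only the small wrapped key changes, a password change never requires re-encrypting the disk itself.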


The annoying eBook vs. Paperback Pricing

I’ve been an avid Kindle user for more than a year. However, once in a while I come across something like this when I’m shopping for a new book:

As you can see, Amazon sells the Kindle edition for a higher price than the paperback. This book of course isn’t the only example of this ridiculous pricing, and anyone who browses the Kindle store will surely find more.

This really upsets me, as there is no honest reason to price an electronic edition higher than a real dead-tree paper edition. In both cases, the author and the publisher get their royalties and share of the profits. But the Kindle edition doesn’t carry many of the related expenses, like storage, transportation (from the publisher to Amazon), and above all printing costs.

I don’t know who is to blame for this absurd thing, Amazon or the publisher (or even both). But I do know that this is bad for everyone: for the customer, because he pays more, and for Amazon and the publisher, because in the long run it encourages piracy. A customer who feels he’s being treated unfairly will be more willing to play an unfair game as well.

Using gitg without installing

I’m working on adding spell checking support to gitg. If you intend to use gitg without installing it, a little hack is necessary. You’ll need to symlink the gitg directory (the one with the source files) as ui.

ln -s gitg ui

./configure /pathto/below/gitg

The reason is that gitg will look for Glade UI files under $(datadir)/gitg/ui and in gitg’s source the UI files are in the gitg directory and not in ui.

You can see above a screenshot of gitg with spell checking enabled. Hopefully I’ll be done with the changes soon and they will be merged to upstream quickly.

Update: There are a couple more things to do in order to get gsettings’ schemas right.

mkdir glib-2.0

ln -s ../data glib-2.0/schemas

glib-compile-schemas data/

XDG_DATA_DIRS=".:/usr/share/" ./gitg/gitg

For the schemas thing, see glib-compile-schemas’ man page.

Update 2011-12-17: Jesse (gitg’s maintainer) hasn’t responded to my email regarding the new feature, so I’ve opened a bug (#666406) for it. If you’re willing to try the changes yourself, you can pull them from git://github.com/guyru/gitg.git spellchecker.

Modified Variant Whitespace Template

Variant Whitespace is a nice minimalistic template by Andreas Viklund.

Andreas chose to put the sidebar above the content, which I prefer not to do. Furthermore, as the sidebar was a “float” that came before the content, it caused additional inconveniences. E.g. if you had an element with clear: both, it would be pushed below the sidebar. I’ve patched it a bit in order to fix those issues. You can find my modified version here: variant-whitespace.tar.gz

Extracting Data from Akonadi (Kontact)

In older versions of KDE, Kontact used to keep its data in portable formats: iCalendar files for KOrganizer and vCard for KAddressBook. But some time ago Kontact moved to Akonadi, a more sophisticated storage backend. By default (at least on my machine) Akonadi uses MySQL (with InnoDB) as the persistent storage. I didn’t consider this thoroughly when moving my data to Gnome, and I got stuck with the data.

To make things worse, somewhere along the update to KDE 4.6, some of the data got moved to ~/.akonadi.old. Being stuck with the InnoDB tables, I tried the following solutions without much success:

- Loading the InnoDB tables into a MySQL server. It didn’t go well: MySQL complained about weird stuff, and I gave up in search of a simpler solution.

- I booted an OpenSuse virtual machine with KDE and tried loading my old data. Apparently, my ~/.akonadi folder contained nothing interesting, and Suse’s KDE 4.6 refused to load the data from ~/.akonadi.old after I renamed it.

So, being upset about Akonadi, I did some grepping and found strings from my contacts and todo lists in the following files:

Binary file .local/share/akonadi.old/db_data/ibdata1 matches

Binary file .local/share/akonadi.old/db_data/akonadi/parttable.ibd matches

Binary file .local/share/akonadi.old/db_data/ib_logfile0 matches

I opened the files with vim and found that they contained vCard and iCalendar blobs. So instead of being stored directly on the file system, where they would be easily accessible, they are stored in the DB files. I figured it would be easiest to just extract the data from the binary files. I’ve used the following script:

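    import sys

    # Markers that delimit an iCalendar object inside the binary data.
    START_DELIM = "BEGIN:VCALENDAR"
    END_DELIM = "END:VCALENDAR"

    def main():
        # Read the whole binary file from stdin (a Python 2 byte string).
        bin_data = sys.stdin.read()
        vcards = []
        start = bin_data.find(START_DELIM)
        while start > -1:
            end = bin_data.find(END_DELIM, start + 1)
            if end == -1:
                # Truncated entry without a closing marker: stop here.
                break
            vcards.append(bin_data[start:end + len(END_DELIM)])
            start = bin_data.find(START_DELIM, end + 1)
        print "\n".join(vcards)

    if __name__ == "__main__":
        main()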

It reads a binary file from stdin and outputs the iCalendar data embedded in it. If you change START_DELIM and END_DELIM to VCARD instead of VCALENDAR, it will extract the contacts’ data.
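The script above targets Python 2. A rough Python 3 sketch of the same idea, working on bytes and taking the component name as a parameter so that one function covers both VCALENDAR and VCARD, might look like this. The function name `extract_components` is made up for this example.

```python
def extract_components(data, name):
    """Return every BEGIN:<name> ... END:<name> span found in a bytes object."""
    start_delim = b"BEGIN:" + name
    end_delim = b"END:" + name
    found = []
    start = data.find(start_delim)
    while start != -1:
        end = data.find(end_delim, start + len(start_delim))
        if end == -1:
            # Opening marker without a closing one: ignore the remainder.
            break
        found.append(data[start:end + len(end_delim)])
        start = data.find(start_delim, end + len(end_delim))
    return found

# Made-up blob mixing binary junk, one complete vCard, one complete
# calendar and one truncated vCard.
blob = (b"\x00junk BEGIN:VCARD\nFN:Alice\nEND:VCARD\x01\x02"
        b"BEGIN:VCALENDAR\nEND:VCALENDAR BEGIN:VCARD\nFN:Bob")

print(extract_components(blob, b"VCARD"))
print(extract_components(blob, b"VCALENDAR"))
```

To run it on a real file, the data could be read with `sys.stdin.buffer.read()` and each result written to `sys.stdout.buffer`.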

This migration had me thinking about how important it is that an application’s data be easily portable. It’s something that, I feel, not many projects rank high enough among their priorities.

Importing CSV to Evolution

I’ve decided to try Gnome on a new machine that I’ve got, and as part of the move I’ve switched to Evolution (from Kontact). I had some contacts stored in a spreadsheet which I’ve tried to import as CSV to Evolution.

Apparently, unlike Kontact, Evolution won’t ask you what every column means. It just assumes that the CSV follows some weird scheme. If you try to import the CSV, it forces that scheme on your CSV even if it looks completely different. The result: a complete mess of the fields in each contact.

I didn’t find a reference for what Evolution expects its CSVs to look like, and I didn’t want to analyse that either. So finally, I set up a virtual machine, loaded it with the OpenSuse KDE live CD, imported the CSV into Kontact and exported it as vCard, which I then imported into Evolution.
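Since vCard was the format that finally got the contacts in, another route would be to convert the spreadsheet to vCard directly. This is a rough sketch and not what I actually did: the column names `name`, `email` and `phone` are hypothetical, and the whole name is put into the family-name slot of the `N` field for simplicity.

```python
import csv
import io

def escape(value):
    """Escape characters that are special in vCard text values."""
    return (value.replace("\\", "\\\\")
                 .replace(";", "\\;")
                 .replace(",", "\\,")
                 .replace("\n", "\\n"))

def csv_to_vcards(csv_text):
    """Turn CSV text with name/email/phone columns into vCard 3.0 text."""
    cards = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = escape(row["name"])
        lines = ["BEGIN:VCARD", "VERSION:3.0",
                 "N:" + name + ";;;;",  # simplification: no given/family split
                 "FN:" + name]
        if row.get("email"):
            lines.append("EMAIL:" + escape(row["email"]))
        if row.get("phone"):
            lines.append("TEL:" + escape(row["phone"]))
        lines.append("END:VCARD")
        cards.append("\r\n".join(lines))
    # vCard lines are terminated with CRLF.
    return "".join(card + "\r\n" for card in cards)

# Made-up sample data.
sample = ("name,email,phone\n"
          "Alice Example,alice@example.com,555-0100\n"
          "Bob,,\n")
print(csv_to_vcards(sample))
```

The explicit column mapping is the point here: each field ends up where you decide it should, instead of wherever the importer guesses.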

I believe that the current CSV import in Evolution just causes user frustration, as it doesn’t act as expected.

Other weird problems I’ve encountered in Evolution which I haven’t solved yet:

- Evolution gives me “Could not remove address book” when I try to delete existing address books. After restarting the program I succeeded in deleting some of them, but not all.

- When I imported the vCard from Kontact, the contacts appeared in every address book (except one) and also appeared magically in new address books I created. The contacts in each of the address books seem to be linked together. When I tried to delete them from one address book, they disappeared from the rest as well.

If you know how to solve these issues I would really like to hear.

Security Vulnerabilities in the Imagin Photo Gallery

Following a friend’s request, I did a short security review of the Imagin photo gallery a couple of weeks ago. I looked at the newest version, v3 beta5, but the vulnerabilities may also apply to older versions. So here they are, from least to most important in my opinion.


Python’s base64 Module Fails to Decode Unicode Strings

If you’ve got a base64 string as a unicode object and you try to use Python’s base64 module with altchars set, it fails with the following error:

TypeError: character mapping must return integer, None or unicode

This pretty unhelpful error message also occurs if you call any function that uses altchars indirectly. For example:

base64.urlsafe_b64decode(unicode('aass'))

base64.b64decode(unicode('aass'),'-_')

Both fail, while the following work:

base64.urlsafe_b64decode('aass')

base64.b64decode(unicode('aass'))

While it’s not complicated to fix (just encode any unicode string to an ASCII byte string first), it’s still annoying.
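A minimal sketch of that workaround: a small wrapper that encodes text to ASCII bytes before decoding. The helper name `urlsafe_b64decode_text` is made up for this example. The `isinstance` check is written so that the same code should also run on Python 3, where text strings are accepted anyway.

```python
import base64

def urlsafe_b64decode_text(data):
    """Decode URL-safe base64 given either a text string or a byte string."""
    if not isinstance(data, bytes):
        # Text (unicode) input: base64 data is plain ASCII, so encode it first.
        data = data.encode("ascii")
    return base64.urlsafe_b64decode(data)

# Same result for text and byte input.
assert urlsafe_b64decode_text(u"aass") == urlsafe_b64decode_text(b"aass")

# The URL-safe characters '-' and '_' map to '+' and '/'.
assert urlsafe_b64decode_text(u"-_-_") == base64.b64decode(b"+/+/")
```

If the string contains non-ASCII characters, `encode("ascii")` raises `UnicodeEncodeError`, which at least points at the real problem.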